The hidden costs of AI projects rarely show up as line items in the initial proposal.

Not because every vendor is trying to mislead you. Some are. Most are not. The bigger problem is that the number in the proposal usually covers the parts the vendor can see and control: model development, architecture, deployment, maybe some testing. The costs that end up hurting you later are the ones tied to your data, your systems, your users, your compliance requirements, and your organization’s ability to absorb what gets built.

That is where budgets get blown.

A 2025 survey of 372 enterprise organizations found that 80% miss their AI infrastructure forecasts by more than 25%, 24% miss by more than 50%, and 84% report more than a 6% hit to gross margin from AI costs. That is not bad luck. It is a sign that organizations are still underestimating what it really takes to get AI into production and keep it there.

Source: PR Newswire

If you are evaluating an AI project, or comparing proposals, here is what usually gets left out.

Why the Hidden Costs of AI Projects Are So Often Missed

Most AI project proposals are scoped around what the vendor controls.

That means the proposal usually focuses on the visible technical work: model setup, workflows, orchestration, interface design, maybe integration assumptions, and a deployment plan. What gets priced less clearly are the items that depend on your environment. Those are harder to estimate early, so they get minimized, are left vague, or surface later as change requests.

The issue is not that those costs are unusual. The issue is that they are normal.

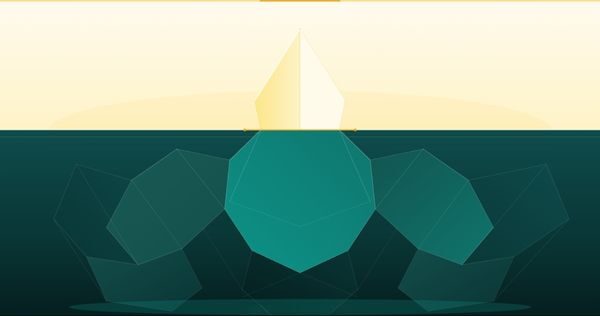

In many AI projects, the hidden costs are not side items. They are the real budget. That is why teams can sign a proposal that looks manageable and still end up with a project that costs materially more than expected. The cost overruns usually come from what surrounds the build, not just the build itself.

The seven categories below account for most of the AI project cost overruns I see in practice.

Data Preparation

HIDDEN COST 1

This is the most common budget surprise in AI projects.

AI systems depend on data. Not abstractly. Very concretely. The data has to be available, usable, structured enough, clean enough, permissioned correctly, and connected to the workflow the system is supposed to support.

If your data is centralized, governed, well-labeled, and reasonably clean, great. You are already ahead of most organizations.

If your data is spread across systems, buried in PDFs, inconsistently named, missing key fields, or owned by nobody in particular, someone has to fix that before the AI system becomes useful. That work is not optional. It is part of the project whether the proposal acknowledges it or not. Industry research often places data preparation at 30 to 40 percent of total AI project cost, and discovering data issues late is significantly more expensive than addressing them before the build starts.

The question I would ask before any AI project starts is simple:

If we had to pull all the data this system needs into one clean, structured, usable dataset today, how long would that take and what would it cost?

If nobody can answer that, you already have your first budget risk.
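To put the 30 to 40 percent figure in concrete terms, here is a minimal sketch of the arithmetic. It assumes the quoted price covers everything except data preparation, which will not be true of every proposal. The function name and the 200,000 quote are hypothetical, chosen only for illustration.

```python
def implied_total_cost(quoted_cost: float, data_prep_share: float) -> float:
    """Estimate total project cost when the quote excludes data preparation.

    Assumption: data preparation makes up `data_prep_share` of the TOTAL
    cost, and the quote covers the remaining (1 - share).
    """
    if not 0 <= data_prep_share < 1:
        raise ValueError("data_prep_share must be in [0, 1)")
    return quoted_cost / (1 - data_prep_share)


quoted = 200_000  # hypothetical proposal amount
for share in (0.30, 0.40):
    total = implied_total_cost(quoted, share)
    unpriced = total - quoted
    print(f"{share:.0%} share: total ~{total:,.0f}, unpriced data prep ~{unpriced:,.0f}")
```

Under those assumptions, a 200,000 quote implies roughly 86,000 to 133,000 of data work that is not on the page. The exact numbers matter less than the fact that the gap is large enough to ask about before signing.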

Integration Work

HIDDEN COST 2

Every system your AI needs to touch is a project inside your project.

This is where a lot of supposedly straightforward AI projects get complicated fast. The moment the system needs to read from one platform, write to another, trigger an event somewhere else, respect access controls, handle failures, and work with legacy infrastructure, the project stops being just an AI build. It becomes an integration effort with AI inside it.

A simple API integration with a modern platform may be quick.

A messy integration with an older system may take weeks, involve other vendors, create security review work, and force process changes nobody anticipated when the proposal was written. The biggest integration surprises are usually the ones nobody mapped in advance, which means they show up when the timeline is already set and the cost of change is highest.

If you want a more realistic AI budget, do not ask only what the model costs. Ask what the system has to connect to, how reliable those systems are, and who has to be involved to make those connections work.

Change Management and Training

HIDDEN COST 3

This one gets ignored constantly, and then everyone acts surprised when adoption is weak.

An AI system your team does not trust, understand, or know how to use will not create value. The technical build might work. The workflow might be sound. The answers might even be good. But if the people who are supposed to use it do not change behavior, the ROI never shows up.

That is not a technical failure. That is an implementation failure.

Change management includes training, documentation, workflow redesign, communication, user feedback loops, escalation paths, and support during the adoption curve. None of that is free. None of it tends to appear prominently in technical proposals. Six months later, organizations can end up with a working system that produces zero value because the organizational adoption work was never done.

If the AI touches a real workflow, then user behavior is part of the project cost.

It needs to be budgeted like it matters, because it does.

Compliance and Security Review

HIDDEN COST 4

In many environments, the AI system is not going to production until it clears compliance and security review.

That is true in healthcare, finance, government, transportation, and other regulated settings. It is also becoming more common in companies that are not traditionally regulated but still have internal requirements around vendor security, data handling, audit trails, accessibility, privacy, and model behavior.

This is where timelines quietly get wrecked.

If compliance review is treated as something you will deal with near launch, you are setting yourself up for delays and design changes at the most expensive stage of the project. That is the worst possible time to discover gaps.

The right move is to scope compliance and security considerations early, while architecture decisions are still flexible and cheaper to change.

If you wait, you usually pay for it twice.

Ongoing Maintenance

HIDDEN COST 5

This is the cost that matters most for long-term AI ROI and gets the least respect in early planning.

AI systems are not static assets.

They drift. The world changes. User behavior changes. Edge cases show up. Regulations move. Underlying models change. Foundation model providers update behavior. Data distributions shift. What worked on launch day may not stay sharp without ongoing monitoring and adjustment.

That is not a defect. It is just how production AI works.

A useful rule of thumb is to plan for 15 to 25 percent of the initial build cost annually for maintenance, monitoring, and periodic retraining. It forces the right mindset: maintenance is not optional overhead, it is part of the operating model.

If the budget assumes the system gets built once and then mostly takes care of itself, the budget is wrong.
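As a rough illustration of the 15 to 25 percent rule of thumb, the sketch below adds annual maintenance to the initial build cost over a multi-year horizon. The build cost and the three-year horizon are hypothetical, and the model assumes a flat annual rate with no inflation or scope growth.

```python
def multi_year_cost(build_cost: float, annual_rate: float, years: int) -> float:
    """Build cost plus flat annual maintenance at `annual_rate` of the build cost."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return build_cost + build_cost * annual_rate * years


build = 200_000  # hypothetical initial build cost
horizon = 3      # hypothetical planning horizon in years
low = multi_year_cost(build, 0.15, horizon)
high = multi_year_cost(build, 0.25, horizon)
print(f"{horizon}-year cost: {low:,.0f} to {high:,.0f} on a {build:,.0f} build")
```

With these inputs, maintenance adds 45 to 75 percent of the build cost over three years, which is why it belongs in the budget from day one rather than in a later request.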

Infrastructure and Compute

HIDDEN COST 6

This category varies more than people expect.

If you are using foundation models through APIs at moderate volumes, compute may be relatively manageable. If you are running heavier workloads, serving more users, handling spikes, or using your own infrastructure, the forecasting gets harder fast.

The part many teams underestimate is not just baseline usage. It is peak usage.

A system that looks affordable under normal conditions may behave very differently during a launch, a seasonal spike, a customer event, or an operational disruption. If the infrastructure is not designed and budgeted for peak load, cost surprises show up quickly. Mavvrik’s 2025 report also points to a broader cost surface than most teams assume, with data platforms and network access ranking ahead of LLM token costs as sources of unexpected AI spend.

That is an important point.

A lot of teams fixate on model cost and miss the surrounding stack.

Storage, logging, orchestration, monitoring, and data movement do not always look dramatic on their own, but together they can materially change the economics of a production system.
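The peak-usage point can be made concrete with a small sketch. Every number here is hypothetical (request volume, per-request cost, the size and length of the spike), and the model covers only per-request cost, not the storage, logging, and data movement mentioned above.

```python
def monthly_request_cost(
    baseline_per_day: float,
    cost_per_request: float,
    peak_days: int = 0,
    peak_multiplier: float = 1.0,
    days_in_month: int = 30,
) -> float:
    """Monthly cost when `peak_days` run at `peak_multiplier` times baseline volume."""
    if not 0 <= peak_days <= days_in_month:
        raise ValueError("peak_days must be between 0 and days_in_month")
    normal_days = days_in_month - peak_days
    # Express peak days as their equivalent number of baseline days.
    equivalent_days = normal_days + peak_days * peak_multiplier
    return baseline_per_day * equivalent_days * cost_per_request


steady = monthly_request_cost(10_000, 0.02)
spiky = monthly_request_cost(10_000, 0.02, peak_days=5, peak_multiplier=6.0)
print(f"steady month: {steady:,.0f}; month with a 5-day 6x spike: {spiky:,.0f}")
```

In this example, five days at six times normal volume nearly doubles the monthly bill. A forecast built only on average usage would miss that entirely.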

The Cost of Getting It Wrong

HIDDEN COST 7

This is the most expensive cost on the list, and it never appears in the proposal.

If the AI system is scoped incorrectly, built for the wrong problem, or deployed into an organization that is not ready for it, the cost is not just the build. It is the build cost, the restart cost, the opportunity cost, and the trust cost.

That last one matters more than people think.

A visible AI project that fails does not just burn budget. It often makes the organization more skeptical of the next one, even if the next one is better chosen and better designed.

The scoping work done before build is the most important investment in the project because it reduces the chance of building the wrong thing in the first place.

I have seen teams spend six to twelve months on a project that was never going to create value because the original problem definition was wrong. That kind of failure is expensive in every direction.

The cheapest AI project is often the one you do not build until the problem is clear.

What Smart Buyers Do Differently

The strongest AI buyers do not just compare vendor proposals.

They pressure-test the assumptions underneath them.

They ask:

- What data work is implied here but not priced clearly?

- What integrations are assumed to be simple?

- What training and workflow changes are required for adoption?

- What compliance or security reviews are likely to surface?

- Who owns the system after launch?

- What happens if usage doubles or spikes?

- What does failure look like, and what would restarting cost?

Those are better questions than “What is your hourly rate?” or “Can you do it cheaper?”

Cheaper is not the same as lower cost.

Not in AI.

Before You Commit

If you are trying to budget responsibly for an AI project, do not stop at the proposal.

Look at the full operating picture.

Look at the data work.

Look at the integration burden.

Look at adoption.

Look at governance.

Look at maintenance.

Look at infrastructure.

Look at the downside cost of getting the scope wrong.

Our post on what a production AI agent actually costs covers the build-cost ranges by project type. This post is about everything around those ranges that tends to surprise people. Taken together, they give you a much more honest view of what you are really committing to before a contract gets signed.

And if you have not done a formal AI readiness assessment yet, that is the right starting point before any cost conversation. The readiness gaps it surfaces are usually the same hidden costs that show up later, except early enough that they are much cheaper to address.